A website content audit is a systematic evaluation of every piece of content on your site, assessing what’s driving results, what’s underperforming, and what’s actively working against you. It is one of the highest-leverage activities in SEO, and most teams either skip it entirely or do it wrong.

Most content audit guides stop at “create a spreadsheet and decide what to keep.” This one goes further. We are going to cover AI search visibility, generative engine optimization, and how to actually measure the business impact of the work after you execute it.

Let’s get into it.

What Is a Website Content Audit (and How Does It Differ from a Content Inventory)?

A content inventory is data collection. You are pulling a list of every URL on your site along with basic metadata: title, publish date, word count, and content type. It tells you what exists.

A content audit is evaluation and decision-making. You take that inventory and layer in performance data, quality assessment, and strategic judgment to determine what each piece of content should do next.

The inventory is the raw material. The audit is the work. If you would rather have an expert handle this process for your site, take a look at my AI SEO audit service.

Done well, a content audit produces measurable results across several fronts. You will see improved organic rankings as thin and duplicate content gets cleaned up. You will see better traffic quality as content gets realigned to actual buyer intent. You will reduce the content bloat that silently drains crawl budget and dilutes your site’s authority. And increasingly, a well-executed audit will improve your visibility in AI Overviews, ChatGPT, and Perplexity, platforms that are now a meaningful part of how buyers research before they ever contact a vendor.

When Should You Run a Content Audit?

The honest answer is that you should be running content audits on a quarterly or semiannual cadence, not just when something breaks. That said, there are specific triggers that should push you to run one immediately:

- A noticeable decline in organic traffic, even if rankings haven’t moved dramatically

- A Google core algorithm update that hit your site’s visibility

- A site redesign or platform migration on the horizon

- A sudden drop in AI Overview citations or featured snippet ownership

- Keyword cannibalization issues surfacing in Ahrefs (multiple pages competing for the same terms)

- A content library that has grown without a clear strategy behind it

Proactive auditing beats reactive auditing every time. By the time a traffic decline shows up in GA4, you are already months behind. Teams that audit on a set schedule catch problems before they compound.

Step 1: Define Your Audit Goals and Success Criteria

This is the step most teams skip, and it is the reason most audits produce a spreadsheet that nobody acts on.

Your audit goals determine everything: which pages you prioritize, which metrics you pull, and how you define success at the end. Without them, you are collecting data for the sake of collecting data.

Here is what good audit goals look like in practice:

- Increase organic traffic to product and solution pages by 30% over the next six months

- Reduce the number of zero-traffic pages by 40% through consolidation and pruning

- Improve average ranking position for target keywords from position 14 to position 7

- Increase the number of pages cited in AI Overviews and ChatGPT responses by 25%

Notice that each of those is specific and measurable. “Improve our content” is not a goal. “Increase organic RFI conversions by 20% over the next quarter” is.

I would also recommend connecting your audit objectives directly to business OKRs, not just SEO metrics. When you can show leadership that a content audit contributed to a 14% increase in qualified leads from organic search, the work gets resourced properly. When you frame it as an SEO exercise, it gets deprioritized.

Step 2: Build Your Content Inventory

Before you can evaluate anything, you need a complete picture of what’s on your site. There are four reliable ways to build and enhance your inventory:

- CMS export: Most platforms (WordPress, HubSpot, Webflow) allow you to export a full list of published URLs. This is the fastest starting point.

- XML sitemap: Pull your sitemap and use it as the foundation for your inventory. Keep in mind that sitemaps sometimes exclude pages that should be included, so treat this as a starting point, not a complete list.

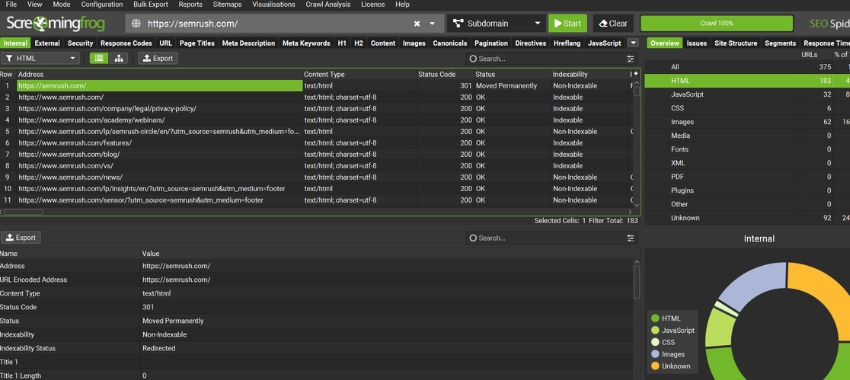

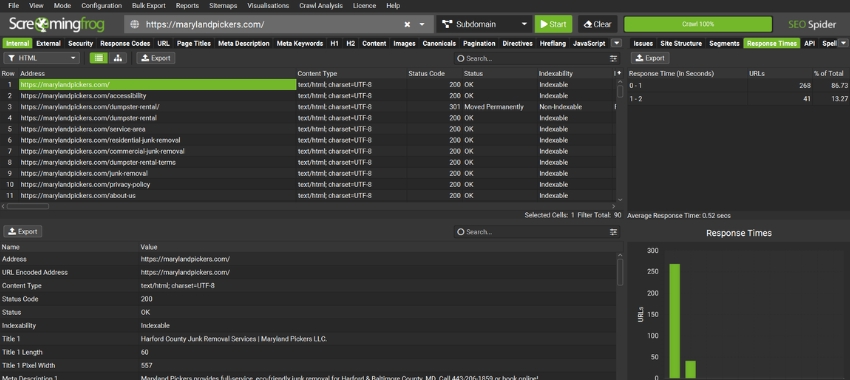

- Screaming Frog crawl: This is the most thorough method. Screaming Frog will crawl every accessible URL on your site and return a full export with status codes, metadata, word count, and more. For any site above a few hundred pages, this is the approach I recommend.

- Google Analytics: Go to your Google Analytics account, head to Reports → Engagement → Landing Page. Set your date range to the last six months and filter by Session Default Channel Group matching Organic Search. That isolates your organic traffic from everything else and gives you a clean view of every page on your website that had an organic visitor over the last six months.
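If you would rather script the sitemap step than copy URLs by hand, here is a minimal Python sketch. It assumes a standard sitemaps.org `<urlset>` file. The function name `urls_from_sitemap` and the sample sitemap are hypothetical and inlined so the example runs offline; in practice you would read your own sitemap file instead.

```python
import xml.etree.ElementTree as ET

# Namespace used by the sitemaps.org protocol for <urlset> documents.
SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}


def urls_from_sitemap(xml_text: str) -> list[dict]:
    """Return one inventory row per <url> entry in a sitemap document."""
    root = ET.fromstring(xml_text)
    rows = []
    for url in root.findall("sm:url", SITEMAP_NS):
        loc = url.findtext("sm:loc", default="", namespaces=SITEMAP_NS).strip()
        lastmod = url.findtext("sm:lastmod", default="", namespaces=SITEMAP_NS).strip()
        if loc:  # skip malformed entries that have no <loc>
            rows.append({"url": loc, "last_modified": lastmod})
    return rows


# Hypothetical sitemap content (assumption: not taken from any real site).
SAMPLE_SITEMAP = """<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url><loc>https://example.com/</loc><lastmod>2024-05-01</lastmod></url>
  <url><loc>https://example.com/blog/content-audit</loc></url>
</urlset>"""

inventory = urls_from_sitemap(SAMPLE_SITEMAP)
for row in inventory:
    print(row["url"], row["last_modified"] or "(no lastmod)")
```

Because sitemaps can miss pages, treat the resulting rows as a seed list and merge them with your crawl and analytics exports.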

For each URL in your inventory, capture the following data at this point in your content audit:

- Page title and URL

- Content type (blog post, landing page, product page, resource, etc.)

- Publish date and last updated date

- Word count

- Meta description

- Structured data/schema markup

- Unique inlinks and outlinks

- Canonical tags

- H1 and H2 tags

- Response codes

For large sites, use a phased approach. Trying to audit 10,000 pages at once is how audits stall and never get finished. Phase 1 covers your highest-traffic subfolders. Phase 2 covers subfolders where content is ranking in positions 5 through 20, the ones closest to a meaningful ranking jump. Phase 3 covers low to zero-traffic subfolders. This sequencing lets you generate quick wins early while the larger work continues in the background.
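The phased sequencing can be sketched in a few lines of Python. This is an illustration, not a fixed rule: the field names (`organic_sessions`, `avg_position`), the `high_traffic_sessions` cutoff of 1,000, and the sample pages are all assumptions you would replace with your own export and thresholds.

```python
from collections import defaultdict
from urllib.parse import urlparse


def subfolder(url: str) -> str:
    """Return the first path segment, e.g. '/blog/' for /blog/post-1."""
    parts = [p for p in urlparse(url).path.split("/") if p]
    # A URL like /pricing has no subfolder, so it is grouped under the root.
    return f"/{parts[0]}/" if len(parts) > 1 else "/"


def assign_phases(pages: list[dict], high_traffic_sessions: int = 1000) -> dict[str, int]:
    """Map each subfolder to audit phase 1, 2, or 3."""
    sessions = defaultdict(int)
    near_page_one = defaultdict(bool)
    for page in pages:
        folder = subfolder(page["url"])
        sessions[folder] += page["organic_sessions"]
        position = page.get("avg_position")
        if position is not None and 5 <= position <= 20:
            near_page_one[folder] = True

    phases = {}
    for folder, total in sessions.items():
        if total >= high_traffic_sessions:
            phases[folder] = 1  # highest-traffic subfolders first
        elif near_page_one[folder]:
            phases[folder] = 2  # content ranking in positions 5 through 20
        else:
            phases[folder] = 3  # low to zero-traffic subfolders
    return phases


# Hypothetical rows; in practice these come from your crawl and GSC exports.
PAGES = [
    {"url": "https://example.com/blog/a", "organic_sessions": 900, "avg_position": 3.2},
    {"url": "https://example.com/blog/b", "organic_sessions": 400, "avg_position": 8.0},
    {"url": "https://example.com/resources/x", "organic_sessions": 40, "avg_position": 12.5},
    {"url": "https://example.com/news/old-launch", "organic_sessions": 0, "avg_position": None},
]

phases = assign_phases(PAGES)
print(phases)
```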

Content Audit Tools for Building Your Inventory

Here is how the primary tools compare:

| Tool | Best For | Pricing |
|---|---|---|
| Screaming Frog | Full site crawl, technical data, URL export | Free up to 500 URLs; £259/year for unlimited |
| Ahrefs Site Audit | Combining crawl data with organic performance metrics | Paid; starts at $129/month |
| Google Search Console | Indexation status, search performance per URL | Free |
| Google Analytics | Finding pages with organic traffic that can slip through the cracks | Free |
| Semrush Site Audit | Technical audit with content quality flags | Paid; starts at $139.95/month |
| Sitebulb | Visual crawl reporting, great for client presentations | Paid; starts at $13.50/month |

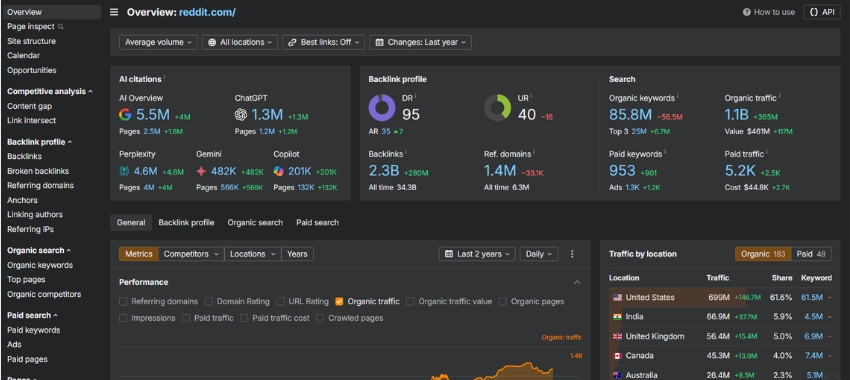

I use Ahrefs combined with Screaming Frog for almost every audit I run. The ability to pull crawl data, keyword rankings, traffic estimates, and backlink counts in one place saves significant time and gives me a more complete picture than any other single tool.

Step 3: Collect Performance Data (Quantitative Metrics)

Once your inventory is built, you pull the numbers. This is where you connect each URL to its actual performance, and start to see which pages are carrying weight and which ones are dead weight.

Pull data from three sources (Ahrefs and Semrush cover the same ground, so use whichever one you have):

- Google Analytics 4: organic sessions, pageviews, average engagement time, bounce rate, and conversion rate per page.

- Google Search Console: impressions, clicks, average position, and which queries each page ranks for.

- Ahrefs: keyword rankings, estimated organic traffic, referring domains, and do-follow backlink count per URL.

- Semrush: keyword rankings, estimated organic traffic, referring domains, and do-follow backlink count per URL.

Here is the reference table I use on every audit:

| Metric | Source | What It Reveals |
|---|---|---|
| Organic sessions | GA4 | Actual traffic from search |
| Average engagement time | GA4 | Whether visitors are reading or bouncing |
| Conversion rate | GA4 | Whether the page drives business outcomes |
| Bounce rate | GA4 | Visitors who leave a website after viewing only one page, without interacting with the site |
| Average ranking position | GSC | Where you sit in search results |
| Impressions | GSC | Visibility without clicks, CTR opportunity |
| Referring domains | Ahrefs / Semrush | Authority and link equity attached to the page |
| Keyword rankings | Ahrefs / Semrush | Which terms the page ranks for and at what position |

For each page, evaluate against the following quantitative criteria:

- Organic Sessions: Pull this from GA4 using the Landing Page report filtered to Organic Search. Set your date range to the last six months. This is your baseline performance number. It tells you how much real search traffic the page is generating. A page with zero or near-zero organic sessions over six months is a red flag that demands a decision.

- Referring Domains: Pull this from Ahrefs. You are looking specifically at do-follow backlinks, the ones that pass link equity. A page with a strong backlink profile has earned authority that you do not want to throw away carelessly. This number is one of the most important factors in deciding whether to update, consolidate, or delete a page. Never remove a page with meaningful referring domains without a redirect in place.

- Keyword Rankings: In Ahrefs, pull the full keyword ranking breakdown per page. You want three numbers: total keywords the page ranks for, how many rank in the top 10, and how many rank in the top 3. A page ranking for 200 keywords, but with only two in the top 10, is a page with significant untapped potential; it just needs the right improvements to push those rankings over the line. A page ranking for three keywords total, with none in the top 20, is a different conversation entirely.

- Conversion Rate: Pull this from GA4 and compare each page against its peer group: blog posts against blog posts, landing pages against landing pages. Not every page on your site will have a meaningful conversion rate to measure, and that is fine. But for pages that are designed to drive action (demo requests, contact form submissions, content downloads), this number tells you whether the page is pulling its weight at the bottom of the funnel. A high-traffic page with a below-average conversion rate is not an SEO problem. It is a conversion problem.

- Average Ranking Position: Pull this from Google Search Console over the last six months. This metric tells you where your page sits in search results on average across all the queries it appears for. A page with an average position between 5 and 15 is in the most actionable range, close enough to meaningful traffic that targeted improvements can produce fast results. Pages sitting between positions 20 and 30 have a longer road ahead, but are worth evaluating for strategic value before you write them off.

- Click-Through Rate (CTR): Pull your clicks, impressions, average position, and CTR directly from Google Search Console’s performance report. CTR tells you how often someone sees your page in search results and actually clicks on it. The reason this metric matters so much is that a low CTR relative to your ranking position is almost always a title tag or meta description problem, not a rankings problem.

This quantitative data set is the backbone of your entire content audit. Everything else, the qualitative assessment, the action designations, the prioritization decisions, flows from what these numbers tell you first. Organic traffic shows you what’s working. Keyword positioning shows you what’s close. And the backlink profile of each URL tells you what you cannot afford to touch without a plan. Get these numbers right before you make a single decision.
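Two of the calculations above are easy to script once you have the exports: the keyword ranking breakdown (total, top 10, top 3) and a check for CTR that is low relative to ranking position. The sketch below is one possible approach, not a standard formula. The position bands, the `ratio` of 0.5, and the sample rows are assumptions chosen for illustration.

```python
from statistics import median


def keyword_breakdown(positions: list[int]) -> dict[str, int]:
    """Summarize one page's rankings: total keywords, top 10, and top 3."""
    return {
        "total": len(positions),
        "top_10": sum(1 for p in positions if p <= 10),
        "top_3": sum(1 for p in positions if p <= 3),
    }


def ctr(clicks: int, impressions: int) -> float:
    """Click-through rate as a fraction; 0.0 when there are no impressions."""
    return clicks / impressions if impressions else 0.0


def band(position: float) -> str:
    """Group pages into coarse position bands (band edges are an assumption)."""
    if position <= 3:
        return "1-3"
    if position <= 10:
        return "4-10"
    return "11+"


def flag_low_ctr(rows: list[dict], ratio: float = 0.5) -> list[str]:
    """Return URLs whose CTR is below ratio x the median CTR of their band."""
    by_band: dict[str, list[float]] = {}
    for row in rows:
        by_band.setdefault(band(row["avg_position"]), []).append(
            ctr(row["clicks"], row["impressions"])
        )
    flagged = []
    for row in rows:
        benchmark = median(by_band[band(row["avg_position"])])
        if ctr(row["clicks"], row["impressions"]) < ratio * benchmark:
            flagged.append(row["url"])
    return flagged


# Hypothetical GSC rows for three pages ranking in similar positions.
GSC_ROWS = [
    {"url": "/a", "clicks": 50, "impressions": 1000, "avg_position": 6.1},
    {"url": "/b", "clicks": 40, "impressions": 1000, "avg_position": 7.4},
    {"url": "/c", "clicks": 10, "impressions": 1000, "avg_position": 5.8},
]

summary = keyword_breakdown([1, 2, 8, 14, 35])
title_tag_candidates = flag_low_ctr(GSC_ROWS)
print(summary, title_tag_candidates)
```

Comparing each page against peers in the same position band keeps the check self-calibrating, so you do not need to trust a published CTR curve that may not match your niche.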

Step 4: Evaluate Content Quality (Qualitative Assessment)

Quantitative data tells you how a page is performing. Qualitative assessment tells you why, and what to do about it.

For each page, evaluate against the following criteria:

- Accuracy: Is the information current and factually correct?

- Depth: Does the page genuinely answer the question, or does it skim the surface?

- Relevance: How closely does the page’s content align semantically with your target keyword?

- Originality: Does this page offer a perspective or insight that isn’t available elsewhere, or is it a repackaged version of what every other site already says?

- E-E-A-T signals: Does the page demonstrate first-hand experience, subject matter expertise, and authoritativeness? Is there an author bio? Are sources cited?

- Brand voice: Does the page sound like your brand, or does it read like it was produced by a different team in a different era?

- Readability: Is it easy to read and scan? Are headings used correctly? Are paragraphs short enough to hold attention?

- UX and accessibility: Does the page load quickly? Is it mobile-friendly? Are images properly tagged?

Google’s Helpful Content guidelines are worth keeping front of mind during this step. The core question Google is asking about every page is: was this written by someone with genuine expertise and first-hand experience, for the benefit of a real reader? If the answer is no, if it was written to rank rather than to genuinely help, it is a liability, not an asset.

Technical SEO Checks During Your Content Audit

Run these checks in parallel with your qualitative assessment, not as a separate project:

- Crawlability: Are target pages accessible to Googlebot? Check robots.txt and meta robots tags in Screaming Frog.

- Indexation: Cross-reference your crawl against Google Search Console’s coverage report. Pages you want indexed that aren’t indexed need immediate attention.

- Canonical tags: Are canonical tags pointing to the right URLs? Misconfigured canonicals are one of the most common silent ranking killers I find in audits.

- Redirect chains: Ahrefs flags these automatically. Chains longer than one hop dilute link equity and slow crawling.

- Broken internal links: Screaming Frog identifies these in every crawl. Fix them, because they damage both user experience and crawl efficiency.

- Core Web Vitals: Pull these from Google Search Console’s Core Web Vitals report. Your Largest Contentful Paint should be under 2.5 seconds on every priority page.

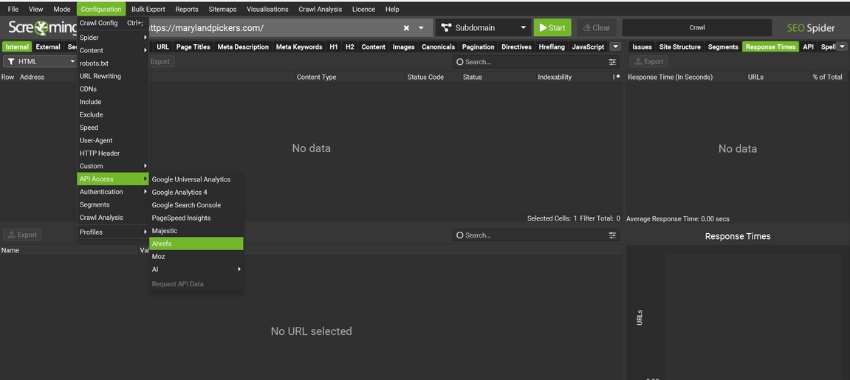
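To make the redirect chain check concrete, here is a small Python sketch that walks a redirect map and reports any chain longer than one hop. The map itself is hypothetical; you would build it from the redirect columns of your crawl export. The function names are illustrative only.

```python
def redirect_chain(start: str, redirects: dict[str, str], max_hops: int = 10) -> list[str]:
    """Follow start through the redirect map and return every URL visited."""
    chain = [start]
    while chain[-1] in redirects and len(chain) <= max_hops:
        target = redirects[chain[-1]]
        looped = target in chain
        chain.append(target)
        if looped:  # redirect loop: record the repeat and stop
            break
    return chain


def chains_to_flatten(redirects: dict[str, str]) -> dict[str, list[str]]:
    """Return only the chains with more than one hop, keyed by starting URL."""
    result = {}
    for source in redirects:
        chain = redirect_chain(source, redirects)
        hops = len(chain) - 1
        if hops > 1:
            result[source] = chain
    return result


# Hypothetical redirect map: source URL -> redirect target.
REDIRECTS = {
    "/old": "/older-new",
    "/older-new": "/new",
    "/promo": "/pricing",
}

long_chains = chains_to_flatten(REDIRECTS)
print(long_chains)
```

Each flagged chain can then be flattened by pointing the first URL directly at the final destination.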

How to Connect Your Data Sources to Screaming Frog

Most people run a Screaming Frog crawl and then spend the next several hours manually pulling data from GA4, Search Console, and Ahrefs into separate tabs, trying to reconcile everything with VLOOKUPs. There is a better way.

Screaming Frog has a built-in API configuration window that lets you connect all of your data sources before the crawl runs. When it’s done, every URL in your export already has performance data, ranking data, and backlink data sitting in the same row. It is one of the most underused features in the tool, and once you set it up, you will never go back to doing it the other way. (I promise, this will literally save you hours of work!)

Here is how to get it connected.

Step 1: Open the API Access Configuration

Go to Configuration → API Access. You will see the full list of available integrations: Google Universal Analytics, Google Analytics 4, Google Search Console, PageSpeed Insights, Majestic, Ahrefs, Moz, and AI. Connect the ones you plan to use before you start your crawl. Do not run the crawl first and try to add them after. The data gets appended during the crawl, not retroactively.

Step 2: Connect Google Analytics 4

Select Google Analytics 4 and sign in with the Google account that has access to your property. Once you authorize the connection, select your GA4 property and choose which metrics you want pulled in. For a content audit, I pull sessions, engaged sessions, average engagement time, and conversions. Set the date range to the last six months before you run the crawl.

Note: Connect GA4 — not Google Universal Analytics. Universal Analytics was sunset in 2023 and the data is no longer being updated.

Step 3: Connect Google Search Console

Select Google Search Console and authorize with the same Google account. Pick your verified property and select the metrics you want appended: clicks, impressions, average position, and CTR. Having this data sit directly next to your crawl results means you can see ranking performance and traffic in the same row without touching a VLOOKUP.

Step 4: Connect Ahrefs

Select Ahrefs and enter your API key. You will find it inside Ahrefs under your account settings. Once it is connected, Screaming Frog will pull referring domains, URL Rating, enhanced keyword data, and backlink counts for every URL it crawls. This one is worth the extra step. Knowing which pages have do-follow backlinks pointing at them before you start making delete and consolidate decisions is not optional. It is the difference between a smart audit and an expensive mistake.

Note: Ahrefs API access requires a paid plan. You must be on the Lite plan or higher. Ahrefs Lite is $129 a month.

Step 5: Connect Your AI Agent

Select AI from the API Access menu and enter your API key. From there, you define the prompts you want Screaming Frog to run against your content during the crawl. I use this to flag thin content, check topical alignment, and surface pages that are missing obvious E-E-A-T signals.

It is not a replacement for actually reading the pages. But across a site with hundreds of URLs, it cuts the initial triage time significantly.

What You End Up With

When the crawl finishes, every URL in your export has crawl data, GA4 metrics, GSC ranking data, and Ahrefs backlink data in a single row. That is your audit spreadsheet foundation, built in one pass, not stitched together from four separate exports.

I run every content audit this way. It saves hours and removes a lot of the manual error that comes from trying to reconcile data across multiple tools after the fact.

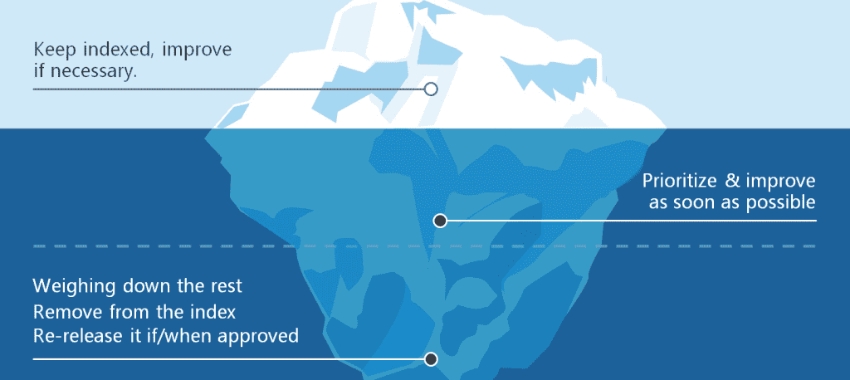

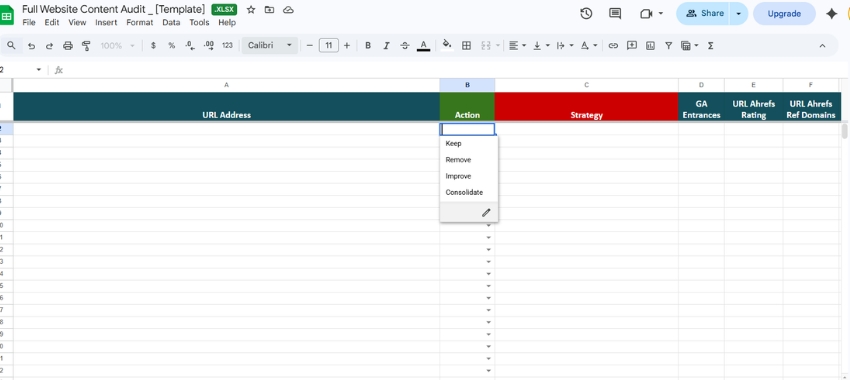

Step 5: Apply the Keep, Update, Consolidate, or Remove Framework

I follow a simple four-tier rating system for each criterion: Keep, Update, Consolidate, or Remove. When you score every page across the quantitative and qualitative criteria we covered, patterns emerge fast. You will see entire content categories that are consistently thin, or a blog post that hasn’t been updated since 2021 and reads like it.

This is where the audit becomes an action plan. Every indexable URL in your inventory gets one of four designations:

Keep

The page has strong traffic, solid rankings, good engagement, a strong backlink profile, and meets your quality standards. Leave it alone. At most, schedule a review in six months.

Update

The page has a decent backlink profile and ranking potential, but is underperforming. Traffic is declining, content is outdated, or the page is not fully aligned to current search intent. This is your largest category in most audits, and the highest-opportunity one. A well-executed update on a page already ranking in position 4 to 11 can produce quick and meaningful traffic increases within 60 to 90 days.

Consolidate

If two or more pages cover the same topic and compete against each other, that’s keyword cannibalization. Pick the strongest, most authoritative page as the primary asset, merge the content from the other pages into it, then 301 redirect the consolidated URLs to the primary asset. Keyword cannibalization is more common than most teams realize. I find it on almost every site I audit using Ahrefs’ keyword overlap reports.
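If you are working from a raw keyword export rather than a dedicated overlap report, cannibalization candidates can be surfaced with a simple grouping. The sketch below is an assumption-laden illustration: the row fields (`keyword`, `url`, `position`), the `max_position` cutoff of 20, and the sample data are hypothetical. Note that the best-ranking URL is only a starting point for choosing the primary asset, since backlinks and content quality matter too.

```python
from collections import defaultdict


def find_cannibalization(rankings: list[dict], max_position: int = 20) -> dict[str, list[str]]:
    """Return keywords where two or more URLs rank, best-ranked URL first."""
    by_keyword = defaultdict(list)
    for row in rankings:
        if row["position"] <= max_position:
            by_keyword[row["keyword"]].append((row["position"], row["url"]))

    overlaps = {}
    for keyword, hits in by_keyword.items():
        urls = [url for _, url in sorted(hits)]
        if len(set(urls)) > 1:  # more than one distinct page competes
            overlaps[keyword] = urls
    return overlaps


# Hypothetical keyword export rows.
RANKINGS = [
    {"keyword": "content audit", "url": "/blog/content-audit", "position": 9},
    {"keyword": "content audit", "url": "/blog/audit-checklist", "position": 14},
    {"keyword": "content inventory", "url": "/blog/content-inventory", "position": 6},
    {"keyword": "content audit", "url": "/services/audit", "position": 48},
]

overlaps = find_cannibalization(RANKINGS)
print(overlaps)
```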

Remove

The page has zero organic traffic, no backlinks, no strategic value, and cannot be meaningfully improved. Remove it and implement a 301 redirect to the most relevant page on your site. Note: Never delete a page with backlinks without redirecting it. You will lose the link equity permanently. If you are not sure whether to remove a page, noindex it instead. You can always remove it later.
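The four designations can be expressed as a small decision function, which is handy for a first pass over a large spreadsheet. Treat this as a rough sketch of the logic described above rather than a definitive rule set: the `Page` fields, the `healthy_sessions` threshold of 500, and the order of the checks are assumptions, and the quality and strategic-value flags still come from human review.

```python
from dataclasses import dataclass


@dataclass
class Page:
    url: str
    organic_sessions: int          # last six months
    referring_domains: int
    meets_quality_bar: bool        # outcome of the qualitative assessment
    competes_with_stronger_page: bool = False
    has_strategic_value: bool = False
    can_be_improved: bool = True


def designate(page: Page, healthy_sessions: int = 500) -> str:
    """Return Keep, Update, Consolidate, or Remove for one page."""
    if page.competes_with_stronger_page:
        return "Consolidate"
    if (
        page.organic_sessions == 0
        and page.referring_domains == 0
        and not page.has_strategic_value
        and not page.can_be_improved
    ):
        return "Remove"
    if page.organic_sessions >= healthy_sessions and page.meets_quality_bar:
        return "Keep"
    return "Update"


# Hypothetical pages covering each designation.
examples = [
    Page("/guide", 2400, 35, True),
    Page("/old-post", 120, 12, False),
    Page("/duplicate-topic", 300, 4, True, competes_with_stronger_page=True),
    Page("/dead-page", 0, 0, False, can_be_improved=False),
]

for page in examples:
    print(page.url, designate(page))
```

A first pass like this only proposes a designation. Anything marked Remove or Consolidate deserves a manual look before a redirect goes live.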

Here is a simple flowchart of content audit strategies for common scenarios to guide the process:

A quick note on attribution: this decision framework was originally developed by the team at Inflow. It is one of the sharpest content strategy frameworks I came across earlier in my career. They have since removed it from their site, but the core logic holds up. What you will find here is that original framework, updated to account for where search is today, including GenAI, AI Overviews, and LLM visibility considerations that did not exist when Inflow first built this.

Small Site

Heavy emphasis on Keyword Research, Content Quality, and building GEO-ready content from the start.

Medium Site

Balance between updating existing content, pruning low-value pages, and structuring content for AI extractability.

Large Site

Heavy emphasis on prioritization, cannibalization cleanup, and phased AI search optimization across content clusters.

Extra Large Site

Focus on pruning at scale, crawl budget management, and programmatic GEO implementation across thousands of pages.

With fewer than 100 pages, every piece of content is auditable in full. Review each page against the Keep / Update / Consolidate / Remove framework. Prioritize depth and E-E-A-T signals — author bios, original data, first-hand experience.

Build a keyword matrix mapping every page to its primary and secondary targets. Run a content gap analysis to identify missing topics.

GenAI & LLM Angle: Structure every priority page with chunk-sized sections (100–300 words) that answer discrete questions. Add FAQ blocks with schema markup. Run the LLM Prompt Test: query ChatGPT, Gemini, and Perplexity with your target questions and check if your content is cited. Fix gaps immediately; at this scale, you can do it page by page.

Treat the site as scalable but still manageable. Propose how many pages can be updated per month for ongoing optimization. Use Ahrefs keyword overlap reports to identify early cannibalization before it compounds.

Prioritize pages ranking in positions 5–20 for content updates — these represent the fastest path to traffic gains. Include a content gap analysis if time permits.

GenAI & LLM Angle: Batch-implement FAQ schema, HowTo schema, and comparison tables across your top-performing content clusters. AI platforms extract structured data more reliably than narrative prose. Audit your top 50 pages for AI extractability using the LLM Prompt Test and prioritize rewrites accordingly.

At this scale, auditing everything at once is impractical. Use the phased approach: Phase 1 covers highest-traffic subfolders, Phase 2 covers pages in positions 5–20, Phase 3 covers zero-traffic subfolders.

Cannibalization is likely widespread. Run Ahrefs keyword overlap reports across content clusters to find competing pages, then consolidate aggressively with 301 redirects.

GenAI & LLM Angle: Implement GEO optimizations at the content cluster level, not page by page. Identify which topic clusters are already being cited by AI platforms and double down. Add semantic HTML hierarchy, authoritative outbound citations, and chunk-based formatting as a template standard across the cluster.

Sites with 10,000+ pages almost always have significant content bloat. The primary focus is reducing the ratio of low-quality to high-quality indexed pages. Identify entire subfolders or page types (tag pages, thin category pages, outdated archives) that can be noindexed or removed in bulk.

Prioritize pages with the highest traffic and backlink profiles for updates. Use the impact-effort matrix rigorously — you cannot touch everything.

GenAI & LLM Angle: At this scale, GEO implementation must be programmatic. Build FAQ blocks, comparison tables, and schema markup into your CMS templates so every new and updated page inherits AI-extractable formatting automatically. Use Screaming Frog’s AI agent integration to flag pages missing GEO signals in bulk during the crawl.

At this size, a full audit is feasible. Apply the “Improve It or Remove It” mantra to every page. Cross-reference traffic in GA4 with ranking data in GSC to identify pages that lost visibility after the update.

Focus on strengthening E-E-A-T signals: add author bios, cite sources, include original data and first-hand experience. Google’s Helpful Content guidelines are the lens for every decision.

GenAI & LLM Angle: Algorithm updates increasingly align with how LLMs evaluate content quality. Pages flagged as “unhelpful” by Google are also unlikely to be cited by ChatGPT or Perplexity. Treat the update recovery and GEO optimization as the same project: depth, accuracy, and originality improve both simultaneously.

Follow the “Improve or Remove” framework aggressively. Identify thin content, doorway pages, duplicate topics, and outdated posts. Prioritize pages with backlinks for improvement; noindex pages without backlinks that cannot be rewritten within the quarter.

A good starting point is pages with relatively high referring domains but declining traffic — these have authority worth preserving.

GenAI & LLM Angle: LLMs favor content with authoritative citations, specific data points, and clear expertise signals. As you rewrite declining pages, embed these signals intentionally. Add comparison tables and structured FAQ blocks; these serve both traditional SEO recovery and AI extractability.

At this scale, a traffic decline often means entire content clusters are underperforming, not just individual pages. Identify which subfolders or topic areas took the biggest hit and prioritize those for the audit.

Consolidate cannibalized pages aggressively: pick the strongest URL per topic, merge content, and 301 redirect the rest. Prioritize based on Copyscape risk scores and Ahrefs referring domain counts.

GenAI & LLM Angle: Run the LLM Prompt Test across your highest-value topic clusters. If AI platforms are citing competitors instead of you for queries you used to own in traditional search, your content likely lacks the structured, extractable formatting LLMs need. Triage the top 20% of pages by strategic value for GEO reformatting.

The primary goal is dramatically reducing the number of indexed low-quality pages. Consider noindexing or removing entire sections, page types, or subfolders that would be considered thin, duplicate, or overlapping content.

Rewrite as many high-value pages as the budget allows. For pages that cannot be rewritten this quarter, noindex them until they can be improved — don’t leave known low-quality content in the index.

GenAI & LLM Angle: At 10,000+ pages, prioritize programmatic fixes. Update CMS templates to auto-generate FAQ schema, semantic heading structures, and chunk-friendly formatting. This single change can improve GEO readiness across thousands of pages without manual per-page work. Track AI citation frequency as a KPI alongside organic traffic recovery.

With a small site, you can run the LLM Prompt Test on every page: query ChatGPT, Gemini, and Perplexity with the questions your content answers and check if you’re cited. Track which competitors are cited instead.

Reformat every priority page with chunk-sized sections, FAQ blocks, comparison tables, and proper schema markup (FAQ, HowTo, Article).

GenAI & LLM Angle: Add first-hand experience signals (original data, specific examples, expert commentary) to every page. LLMs are trained to favor content demonstrating genuine expertise. Link out to credible sources to signal awareness of the broader knowledge landscape. Measure progress by re-running prompt tests monthly.

Audit your top 50 pages by organic traffic for AI extractability. These are the pages most likely to be surfaced by LLMs — if they’re not structured correctly, you’re leaving citations on the table.

Deploy FAQ schema, Article schema, and HowTo schema across all qualifying pages. Build a structured content template that future content follows by default.

GenAI & LLM Angle: AI Overviews and ChatGPT responses pull from pages that make extraction easy. Convert long narrative paragraphs into discrete, self-contained answer blocks of 100–300 words. Add comparison tables wherever options are discussed. Monitor AI Overview citation frequency as a quarterly KPI.

Identify which topic clusters should be generating AI citations based on your organic rankings. If you rank in the top 10 for a topic but aren’t cited by LLMs, the problem is almost always content structure, not authority.

Run a competitive citation analysis: who IS getting cited for your target queries? Reverse-engineer their formatting patterns.

GenAI & LLM Angle: Roll out GEO formatting at the cluster level. Create a standard reformatting playbook: heading hierarchy, FAQ blocks, comparison tables, chunk-sized sections, authoritative outbound links. Apply it systematically across your top 3–5 clusters first, measure citation lift, then expand to the next tier.

Manual page-by-page GEO optimization is not viable at 10,000+ pages. The solution is CMS-level template changes that make every page AI-extractable by default.

Build FAQ blocks, structured data, and chunk-friendly layouts into your templates. Use Screaming Frog’s AI agent to audit GEO readiness in bulk and flag pages that deviate from the template standard.

GenAI & LLM Angle: Establish AI citation tracking as a formal KPI alongside organic traffic. Monitor which pages and topics get cited in AI Overviews, ChatGPT, and Perplexity on a monthly cadence. Feed this data back into your quarterly audit cycle to continuously improve GEO performance. At this scale, the compounding effect of template-level GEO implementation is significant, so measure it at 90 and 180 days.

How to Audit Your Content for AI Search and GEO Visibility

This is the section most content audit guides do not cover. It is also where I see the biggest gap between what brands are doing and what search actually requires right now.

AI Overviews, ChatGPT, Perplexity, and Gemini do not rank pages the way Google does. They extract information from content and synthesize answers. If your content is not structured in a way that makes extraction easy, you will not be cited, even if you rank well in traditional search.

Here is what to check on every priority page:

- Chunk-sized sections: AI platforms pull discrete, self-contained answers. Structure your content in sections of 100 to 300 words that each answer a specific question clearly. Long, unbroken walls of text are hard to extract from.

- FAQ blocks: Explicit question-and-answer formatting is one of the most reliable ways to get cited in AI responses. If your page answers common questions, format them as FAQs.

- Comparison tables: AI platforms love structured data. If you are comparing options, use a table, not a paragraph.

- Schema markup: Implement FAQ schema, HowTo schema, and Article schema where appropriate. This makes your content machine-readable in a way that increases extractability.

- Semantic HTML: Use proper heading hierarchy (H1, H2, H3). AI systems use heading structure to understand content organization.

- Authoritative citations: Link out to credible sources. AI platforms are trained to favor content that demonstrates awareness of the broader knowledge landscape.

- First-hand experience signals: Include specific data points, original insights, and real examples. Generic content does not get cited.

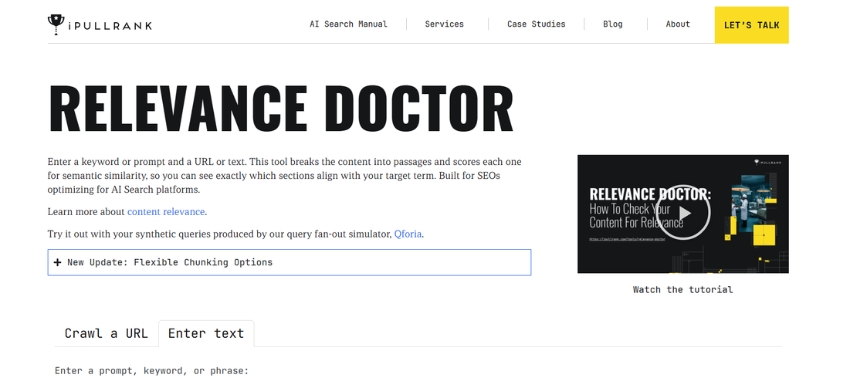
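For the FAQ schema item on the checklist, the markup is a JSON-LD object using the schema.org `FAQPage`, `Question`, and `Answer` types. Here is a minimal Python sketch that generates it from question-and-answer pairs. The helper name `faq_jsonld` and the sample questions are hypothetical, and how you inject the output into a page depends on your CMS.

```python
import json


def faq_jsonld(faqs: list[tuple[str, str]]) -> str:
    """Build schema.org FAQPage JSON-LD from (question, answer) pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in faqs
        ],
    }
    return json.dumps(data, indent=2)


# Hypothetical FAQ content for illustration.
FAQS = [
    ("What is a content audit?", "A systematic evaluation of every piece of content on a site."),
    ("How often should I run one?", "On a quarterly or semiannual cadence."),
]

markup = faq_jsonld(FAQS)
# The output goes inside a <script type="application/ld+json"> tag on the page.
print(markup)
```

The questions and answers in the markup should match the visible FAQ text on the page, so generate both from the same source.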

The LLM prompt test: This is an audit step I run on every client engagement now. Open ChatGPT, Gemini, and Perplexity. Ask each platform the exact questions your content is designed to answer. Check whether your brand or content gets cited in the response. If it doesn’t, you have a GEO gap, and now you know exactly which pages to prioritize for AI search optimization.
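To keep prompt test results comparable from month to month, it helps to log them in a consistent structure. The sketch below assumes you have copied the cited URLs from each response by hand. It does not call any AI platform, and the function names, sample prompts, and URLs are hypothetical.

```python
from urllib.parse import urlparse


def cites_domain(cited_urls: list[str], domain: str) -> bool:
    """True if any cited URL is on the domain or one of its subdomains."""
    domain = domain.lower()
    for url in cited_urls:
        host = (urlparse(url).hostname or "").lower()
        if host == domain or host.endswith("." + domain):
            return True
    return False


def prompt_test_report(domain: str, results: dict) -> dict:
    """Map each (platform, prompt) pair to whether the domain was cited."""
    return {key: cites_domain(urls, domain) for key, urls in results.items()}


# Hypothetical results: (platform, prompt) -> URLs cited in the response.
RESULTS = {
    ("ChatGPT", "what is a content audit"): [
        "https://www.example.com/blog/content-audit",
        "https://competitor.example.org/guide",
    ],
    ("Perplexity", "what is a content audit"): [
        "https://competitor.example.org/guide",
    ],
}

report = prompt_test_report("example.com", RESULTS)
geo_gaps = [key for key, cited in report.items() if not cited]
print(geo_gaps)
```

Each entry in `geo_gaps` is a platform and prompt pair where a competitor was cited and you were not, which gives you the list of pages to prioritize.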

The Post-Audit Playbook: Prioritize, Execute, and Measure Results

Most guides stop at the action plan. This is where the real work starts. If you have completed your audit and need a senior-level strategist to help you execute and measure the results, take a look at how I work with clients. For those executing in-house, here is how to prioritize and sequence the work.

Prioritizing Findings with an Impact-Effort Matrix

Not everything in your audit is equal. Use a 2×2 impact-effort matrix to sequence the work:

| | Low Effort | High Effort |
|---|---|---|
| High Impact | Q1: Quick wins — do these first | Q2: Strategic projects — schedule and resource |
| Low Impact | Q3: Batch these — do them when capacity allows | Q4: Skip these — not worth the investment |

Quick wins (title tag rewrites, internal link additions, FAQ block insertions, schema markup) go into weeks one and two. Major content rewrites and page consolidations go into months one through three. This sequencing keeps momentum up while the heavier work progresses.
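If your audit findings live in a spreadsheet, the quadrant assignment is simple to automate. This sketch assumes you score impact and effort on a 1 to 5 scale and treat 3 or higher as "high". Both the scale and the threshold are illustrative assumptions, as are the sample tasks.

```python
def quadrant(impact: int, effort: int, threshold: int = 3) -> str:
    """Place a task in the impact-effort matrix from 1-5 scores."""
    high_impact = impact >= threshold
    high_effort = effort >= threshold
    if high_impact and not high_effort:
        return "Q1: Quick win"
    if high_impact and high_effort:
        return "Q2: Strategic project"
    if not high_effort:
        return "Q3: Batch"
    return "Q4: Skip"


# Hypothetical audit findings: (task, impact score, effort score).
TASKS = [
    ("Rewrite pillar page", 5, 5),
    ("Fix title tags on top 20 pages", 4, 1),
    ("Refresh archive page styling", 1, 4),
    ("Add alt text to old posts", 2, 2),
]

# Sorting by quadrant label puts Q1 first and Q4 last.
plan = sorted(TASKS, key=lambda task: quadrant(task[1], task[2]))
for name, impact, effort in plan:
    print(quadrant(impact, effort), "|", name)
```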

Recommended Execution Timeline

- Weeks 1–2: Quick wins. Title tags, meta descriptions, internal links, schema markup, FAQ blocks.

- Months 1–2: Medium projects. Content updates on pages ranking in positions 5 to 20, consolidation of cannibalized pages, redirect implementation.

- Months 2–3: Major rewrites. Full overhauls of underperforming pages with high strategic value.

- Ongoing: 30/60/90 day monitoring of ranking and traffic changes across updated pages.

Measuring Audit Impact: KPIs and Reporting

Track these metrics against your pre-audit baseline:

- Organic sessions: overall and by content category

- Average ranking position for target keywords

- Zero-traffic page count: this should decrease over time

- Conversion rate from organic traffic

- AI Overview and ChatGPT citation frequency for priority topics

One important note on timing: SEO results from a content audit take three to four months to fully materialize. Set that expectation with stakeholders upfront. What you will see in the first 30 days are early ranking movements on updated pages. The compounding effect shows up at the 90 to 120 day mark.
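Measuring against the baseline comes down to a percent change per KPI, which you can then compare with the targets you set in Step 1. A minimal sketch follows. The KPI names and numbers are made up for illustration.

```python
def percent_change(baseline: float, current: float) -> float:
    """Percent change from baseline to current (negative means a decrease)."""
    if baseline == 0:
        raise ValueError("baseline must be non-zero to compute a percent change")
    return (current - baseline) * 100 / baseline


# Hypothetical pre-audit baseline and a 90-day snapshot.
BASELINE = {"organic_sessions": 12000, "zero_traffic_pages": 400}
CURRENT = {"organic_sessions": 15000, "zero_traffic_pages": 260}

changes = {name: percent_change(BASELINE[name], CURRENT[name]) for name in BASELINE}
for name, change in changes.items():
    print(f"{name}: {change:+.1f}%")
```

In this made-up example, organic sessions are up 25% against a 30% goal, and zero-traffic pages are down 35% against a 40% goal, so both are on track but not yet met.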

Common Content Audit Mistakes (and How to Avoid Them)

I have run content audits across hundreds of sites over 15 years of SEO work, across enterprise organizations, SaaS companies, universities, and e-commerce brands. These are the mistakes I see most consistently.

- Auditing without clear goals: If you don’t know what success looks like before you start, you will produce a spreadsheet nobody acts on. Define your goals in Step 1 and tie them to business outcomes.

- Collecting too much data: More data is not better data. Focus on the metrics that directly connect to your audit goals. Pulling 40 columns of data per URL sounds thorough, but it mostly produces analysis paralysis.

- Not executing the plan: This is the most common and most costly mistake. An audit that produces a prioritized action plan and then sits in a Google Drive folder is a waste of everyone’s time. Build execution into the project plan from day one.

- Ignoring AI search: If your audit does not account for AI Overview visibility, ChatGPT citations, and GEO signals, you are auditing for 2022, not 2025. Add the GEO checklist to every priority page evaluation.

- Treating it as a one-time project: A content audit is not a one-and-done exercise. Search evolves, buyer intent shifts, and your content library grows. Quarterly audits, even lightweight ones, keep your site healthy and your strategy current.

- Deleting pages with backlinks without redirecting them: I cannot stress this enough. Every page you delete without a 301 redirect is link equity you are throwing away. Always check Ahrefs for referring domains before you remove anything.